The number of network science applications across many different fields has been increasing rapidly. Surprisingly, the development of theory and of domain-specific applications often occurs in isolation, risking a disconnect between theoretical and methodological advances and the way network science is employed in practice.

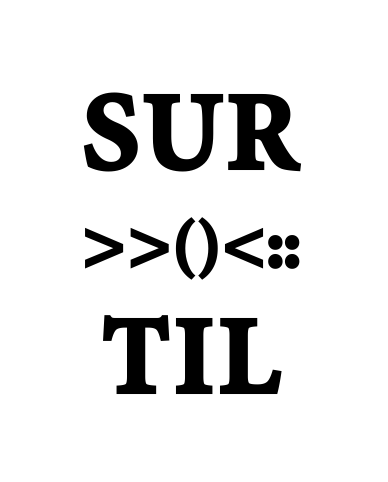

In this work, we will focus on three intimately related factors that are often overlooked as a consequence of the aforementioned gap between theory and practice in network science:

(1) The obscured quality of the data.

(2) The choice of representation.

(3) The suitability of the methods.

Here we address this risk constructively, discussing good practices to guarantee more successful applications and reproducible results. We endorse designing statistically grounded methodologies to address challenges in network science. This approach allows one to explain observational data in terms of generative models, naturally deal with intrinsic uncertainties, and strengthen the link between theory and applications.

A network of interactions A that gives rise to some observational data D should not, in general, be conflated with the data itself. Instead, we need to recognize that the data D is the result of a measurement process P(D∣A) that is conditioned on the unseen network, but is to some extent unavoidably decoupled from it.

In order to estimate the underlying network, we need to perform an inferential step P(A∣D), which needs to include our modeling assumptions about how the network and the data are generated. The resulting estimate Â will have an uncertainty that reflects the experimental design, accuracy of the measurements and overall feasibility of the particular reconstruction problem.
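As a minimal sketch of the inferential step P(A∣D), consider a deliberately simple measurement model. The model is our assumption for illustration and is not taken from the discussion above: every node pair is measured `n` times independently, an existing edge is recorded with probability `1 - fnr`, a non-existing edge is recorded with probability `fpr`, and an edge exists a priori with probability `rho`. Bayes' rule then yields a posterior probability for each edge. The function name `edge_posterior` and all parameter values are hypothetical.

```python
import numpy as np

def edge_posterior(x, n, rho, fpr, fnr):
    """Posterior probability that an edge exists, given that it was
    recorded x times out of n independent measurements.

    Assumed (hypothetical) model: prior edge probability rho,
    false-positive rate fpr, false-negative rate fnr, all strictly
    between 0 and 1.
    """
    x = np.asarray(x, dtype=float)
    # Log of prior times likelihood under "edge present" and "edge absent".
    # The binomial coefficient is identical in both and cancels out.
    log_on = np.log(rho) + x * np.log(1 - fnr) + (n - x) * np.log(fnr)
    log_off = np.log(1 - rho) + x * np.log(fpr) + (n - x) * np.log(1 - fpr)
    # Bayes' rule, written in a numerically stable logistic form.
    return 1.0 / (1.0 + np.exp(log_off - log_on))

# Posterior for a pair recorded 0, 1, 2 or 3 times out of 3 measurements.
counts = np.array([0, 1, 2, 3])
posterior = edge_posterior(counts, n=3, rho=0.1, fpr=0.05, fnr=0.3)
for x, p in zip(counts, posterior):
    print(f"recorded {x}/3 times -> P(edge | data) = {p:.3f}")
```

Under these assumed error rates, the posterior increases with the number of recordings, and pairs recorded only once or twice remain uncertain. This per-edge uncertainty is what a point estimate Â obtained by simple thresholding of the data would hide.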

We can identify a succinct set of best practices for the next advances of network science and its applications to domain-specific challenges.

First, we must understand the provenance of network data and make explicit, via a generative model, the underlying abstraction that one wishes to extract from it. Ideally, our chosen abstraction should not depend on an arbitrary choice of spatial and/or temporal granularity, or we should at least demonstrate that the resulting analysis is not sensitive to this choice.
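One way to probe sensitivity to temporal granularity is to repeat the same analysis at several aggregation windows and compare the outcomes. The sketch below does this for a single statistic, the mean degree of snapshot networks, on synthetic contact events. The event format `(time, i, j)`, the function name `snapshot_mean_degree`, and the window widths are all assumptions made for illustration.

```python
import numpy as np

def snapshot_mean_degree(events, n_nodes, width):
    """Mean degree, averaged over the snapshots obtained by aggregating
    timestamped contacts (time, i, j) into windows of the given width."""
    t = events[:, 0]
    i = events[:, 1].astype(int)
    j = events[:, 2].astype(int)
    window = np.floor(t / width).astype(int)
    degrees = []
    for w in np.unique(window):
        mask = window == w
        # Distinct undirected pairs seen in this window, ignoring self-loops.
        pairs = {(min(a, b), max(a, b))
                 for a, b in zip(i[mask], j[mask]) if a != b}
        degrees.append(2 * len(pairs) / n_nodes)
    return float(np.mean(degrees))

# Synthetic contact events: uniformly random times and node pairs.
rng = np.random.default_rng(0)
n_nodes, n_events = 50, 2000
events = np.column_stack([
    rng.uniform(0, 100, n_events),
    rng.integers(0, n_nodes, n_events),
    rng.integers(0, n_nodes, n_events),
])

# Repeat the same measurement at several granularities.
results = {w: snapshot_mean_degree(events, n_nodes, w) for w in (1, 5, 20, 100)}
for w, k in results.items():
    print(f"window width {w:>3}: mean snapshot degree = {k:.2f}")
```

In this toy case the statistic changes strongly with the window width, so any conclusion drawn from it at a single granularity would need to be reported together with that choice, or replaced by a quantity that is stable across widths.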

Second, we must incorporate, both theoretically and in practice, the presence and the effects of errors and incompleteness in network measurements.

Third, when developing a new analytical approach, we should validate it on synthetic data to guarantee that expected outcomes are found. If the validity of a method cannot be demonstrated on controlled synthetic experiments, results obtained when it is applied to empirical data have little value.
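A controlled synthetic experiment of this kind can be sketched as follows: we plant a known network, simulate a noisy measurement of it, apply the method under test, and compare the result with the planted ground truth. The random-graph ground truth, the error rates, and the per-edge Bayesian reconstruction used as the "method" are all illustrative assumptions, not a prescribed procedure.

```python
import numpy as np

rng = np.random.default_rng(42)
n_nodes, rho = 200, 0.05          # hypothetical size and edge density
fpr, fnr, n_meas = 0.02, 0.3, 5   # hypothetical error rates, repeats per pair

# 1. Plant the ground truth: an Erdős–Rényi graph, stored as one boolean
#    per unordered node pair (upper triangle of the adjacency matrix).
iu = np.triu_indices(n_nodes, k=1)
truth = rng.random(iu[0].size) < rho

# 2. Simulate the measurement process P(D | A): each pair is measured
#    n_meas times, with false positives and false negatives.
p_record = np.where(truth, 1 - fnr, fpr)
counts = rng.binomial(n_meas, p_record)

# 3. Apply the method under test: per-edge posterior P(A | D) under the
#    same model, thresholded at 1/2 to obtain a point estimate.
log_on = np.log(rho) + counts * np.log(1 - fnr) + (n_meas - counts) * np.log(fnr)
log_off = np.log(1 - rho) + counts * np.log(fpr) + (n_meas - counts) * np.log(1 - fpr)
posterior = 1.0 / (1.0 + np.exp(log_off - log_on))
estimate = posterior > 0.5

# 4. Compare with the planted truth.
true_pos = np.sum(estimate & truth)
precision = true_pos / max(estimate.sum(), 1)
recall = true_pos / max(truth.sum(), 1)
print(f"precision = {precision:.3f}, recall = {recall:.3f}")
```

Because the data here are generated from the same model that the method assumes, this is the most favorable setting. A fuller validation would also vary the error rates and generate data that violate the modeling assumptions, to see where the method stops recovering the planted structure.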

More generally, subtle issues can emerge when rigorous methodologies are not employed.

The network science community is characterized by the heterogeneous backgrounds of its members, which is one of our community’s strengths. However, such heterogeneity also means that many of the above points, as a whole, are not universally recognized and therefore require emphasis. For instance, methodological flaws that would be easily identified within the boundaries of a specific discipline can lead to controversial results, even for analyses of the same data, within the wider boundaries of network science.

Therefore, it is necessary to move beyond the particular languages and practices of individual disciplines and positively cross-pollinate to obtain a shared standard of best practices across our multidisciplinary field.