“A generic self-learning emotional framework for machines”

In nature, intelligent living beings have developed emotions to modulate their behavior as a fundamental evolutionary advantage. However, researchers seeking to endow machines with this advantage lack a clear theory from cognitive neuroscience that describes emotional elicitation from first principles, that is, from raw observations to specific affects. As a result, they often rely on case-specific solutions and arbitrary or hard-coded models that fail to generalize to other agents and tasks. Here we propose that emotions correspond to distinct temporal patterns perceived in values that are crucial for living beings in their environment (such as recent rewards, expected future rewards, or anticipated world states). We introduce a fully self-learning emotional framework for Artificial Intelligence agents that learns these patterns and associates them with documented natural emotions. In a case study, an artificial neural network trained on an agent’s unlabeled experiences learned and identified eight basic emotional patterns that are situationally coherent and reproduce natural emotional dynamics. Validation through an emotional attribution survey, in which human observers rated them along the pleasure-arousal-dominance (PAD) dimensions, showed high statistical agreement, distinguishability, and strong alignment with experimental psychology accounts. We believe that the framework’s generality and the cross-disciplinary language it defines, grounded in first principles from Reinforcement Learning, may lay the foundations for further research and applications, leading us toward emotional machines that think and act more like us.
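To make the notion of a "temporal pattern" concrete, the following is a minimal sketch, not the authors' method: it cuts sliding windows over a sequence of recent rewards and a sequence of value estimates, then summarizes each window by its level (mean) and trend (least-squares slope). The function names, the window width, and the choice of mean and slope as features are all assumptions made for illustration. In the framework described above, a neural network learns the patterns from unlabeled experience rather than using hand-picked summaries like these.

```python
from statistics import mean


def slope(xs):
    """Least-squares slope of xs against time steps 0..n-1."""
    n = len(xs)
    t_mean = (n - 1) / 2
    x_mean = mean(xs)
    num = sum((t - t_mean) * (x - x_mean) for t, x in enumerate(xs))
    den = sum((t - t_mean) ** 2 for t in range(n))
    return num / den if den else 0.0


def temporal_patterns(rewards, values, width=4):
    """Summarize each sliding window by level and trend of reward and value.

    Returns one tuple per window:
    (mean reward, reward slope, mean value, value slope).
    """
    if len(rewards) != len(values):
        raise ValueError("rewards and values must have the same length")
    patterns = []
    for start in range(len(rewards) - width + 1):
        r = rewards[start:start + width]
        v = values[start:start + width]
        patterns.append((mean(r), slope(r), mean(v), slope(v)))
    return patterns


# Toy trajectory (made-up numbers): a setback followed by a recovery.
rewards = [0.0, 0.0, -1.0, -1.0, 0.0, 1.0, 1.0, 1.0]
values = [0.5, 0.2, -0.5, -0.2, 0.4, 0.8, 0.9, 1.0]
for pattern in temporal_patterns(rewards, values):
    print(tuple(round(x, 2) for x in pattern))
```

Each printed tuple is one candidate pattern. A window with low mean reward and a rising value estimate, for instance, describes a different situation from one with high reward and a falling estimate.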

Key factors that play a role in the emotional phenomena and guide our research include:

- Perception: Rooted in neuroscience, perception is the initial stage of cognition, where sensory neurons capture external stimuli (like retinal photoreceptors or pain nociceptors). In combination with past experiences, perceptions are processed into increasingly abstract concepts, structuring a perceived reality into an internal representation, crucial for emotional phenomena.

- Reward and pain signals: Emotions are linked to the limbic system, including structures like the hypothalamus and amygdala. The reward circuit and pain signals, forming the ‘common neural currency’ guiding animal behavior, are fundamental to emotional experiences. These subjective signals, our referential scale for what feels ‘good’ or ‘bad’, are shaped by individual homeostatic dynamics, maintaining the organism’s internal equilibrium.

- Retrospection: Past experiences heavily influence the emotional state, which correlates strongly with recent outcomes and with the perceived sign of trend changes. Additionally, repeated exposure to a reinforcing stimulus leads to ‘habituation’, while neutral exposures result in ‘extinction’.

- Anticipation: To survive, animals predict future rewards using perception and memories. In humans, these predictions are encoded by neurons within the basal ganglia, midbrain, parietal, and cortex, linked to dopamine-producing neurons. Dopamine, key in reward prediction learning, is widespread in animal phyla, with octopamine as its counterpart in Arthropoda.

- Knowledgeability: Some emotions may be associated with cognitive representations of the environment (such as surprise or curiosity), or with beneficial or harmful elements based on an individual’s subjective reality.

- Feedback mechanism: Lastly, emotions can act as signals that communicate internal states to the external environment and influence interactions; fear or anger, for example, signal threats to others and prompt specific responses. Internally, emotions can also guide behavioral adjustments that maintain balance and help achieve goals.

- Cognitive gradation: All these factors engage specific brain regions and cognitive abilities, unique to each species, suggesting a progression or genealogy of emotions, as described in biology.
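Several of the factors above have standard counterparts in Reinforcement Learning. The sketch below assumes a fixed chain of states and uses textbook TD(0) value learning to stand in for anticipation, plus an exponentially decayed trace of recent rewards to stand in for retrospection. It is an illustration under these assumptions, not the model used in the paper; `run_td0`, `trace_decay`, and the toy chain are hypothetical names and values.

```python
def run_td0(rewards, alpha=0.1, gamma=0.9, trace_decay=0.8, episodes=200):
    """Learn state values on a fixed chain of states with TD(0).

    rewards[i] is the reward received on leaving state i; the episode ends
    after the last state. Returns the learned values and, for the final
    episode, the per-step (recent_reward, td_error) signals.
    """
    n = len(rewards)
    values = [0.0] * n
    signals = []
    for _ in range(episodes):
        recent = 0.0  # retrospection: decayed average of recent rewards
        signals = []
        for s in range(n):
            r = rewards[s]
            next_value = values[s + 1] if s + 1 < n else 0.0
            # anticipation: reward prediction error for this step
            td_error = r + gamma * next_value - values[s]
            values[s] += alpha * td_error
            recent = trace_decay * recent + (1 - trace_decay) * r
            signals.append((recent, td_error))
    return values, signals


# Toy chain (made-up): a single reward arrives at the end of four steps.
values, signals = run_td0([0.0, 0.0, 0.0, 1.0])
print([round(v, 3) for v in values])
print([(round(rec, 3), round(err, 3)) for rec, err in signals])
```

As the value estimates converge, the prediction error shrinks toward zero even though the reward itself is unchanged: a reward that is fully expected no longer produces a learning signal.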

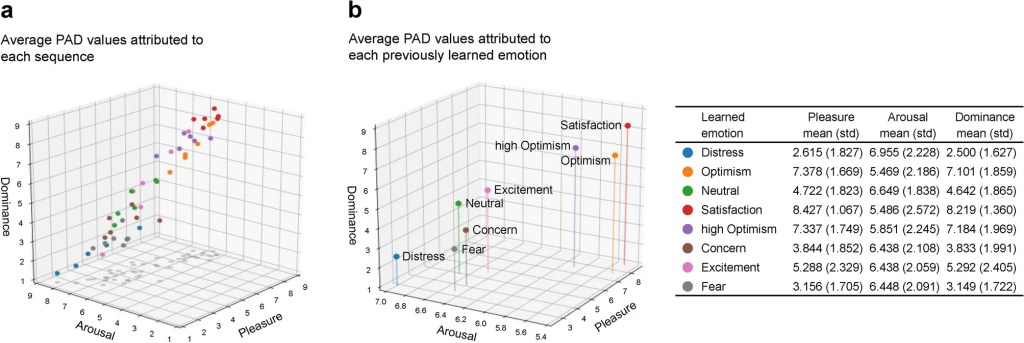

(a) PAD values attributed to each sequence. Unaware of the emotions previously attributed to the sequences, the participants consistently rated samples of the same class with similar PAD values, as illustrated by the color mapping.

(b) Resulting PAD attribution for each emotion. The average PAD values of the videos associated with each emotion show a meaningful and coherent progression along the axes.

| Learned emotion | Top PAD matches |
| --- | --- |
| Distress | Helpless, Scared, Nervous, Fearful |
| Optimism | Capable, Optimism, Masterful, Skilled |
| Neutral/Slight Concern | Anxious, Startled, Troubled, Intense |
| Satisfaction | Proud, Confident, Safe, Achievement |
| High Optimism | Capable, Brave, Confident, Strong, Pride (feeling) |
| Concern | Confused, Nervous, Moody, Suspicious, Startle |
| Excitement | Aroused, Startled, Power, Anxious, Impulse |
| Fear | Insecure, Nervous, Thrill, Fearful, Fright |
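One plausible way to obtain a list of top PAD matches is to average the survey ratings for an emotion and rank the terms of a reference lexicon by their distance to that average in PAD space. The sketch below assumes Euclidean distance and a lexicon stored as a dictionary; the text above does not state which distance measure or lexicon was actually used. All numbers and term names are placeholders, not data from the study.

```python
from math import dist
from statistics import mean


def mean_pad(ratings):
    """Average a list of (pleasure, arousal, dominance) ratings per axis."""
    return tuple(mean(axis) for axis in zip(*ratings))


def top_matches(pad, lexicon, k=4):
    """Return the k lexicon terms closest to pad in Euclidean distance."""
    return sorted(lexicon, key=lambda term: dist(pad, lexicon[term]))[:k]


# Placeholder values for illustration only, not data from the study.
ratings = [(-0.6, 0.5, -0.7), (-0.4, 0.7, -0.5), (-0.5, 0.6, -0.6)]
lexicon = {
    "term_a": (-0.5, 0.6, -0.6),
    "term_b": (0.7, 0.3, 0.6),
    "term_c": (-0.4, 0.4, -0.3),
    "term_d": (0.1, -0.5, 0.2),
}

pad = mean_pad(ratings)
print(top_matches(pad, lexicon, k=2))
```

With a real affective lexicon in place of the placeholder dictionary, the same two functions would return a ranked list of emotion words for each learned pattern.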

We have introduced here a universal emotional language learned from first principles in reinforcement learning and inspired by primary cognitive variables like reward/punishment signals and predicted future values.

The supporting theoretical framework and demonstrated methodology are, to the best of our knowledge, the first successful attempt to formally describe and synthesize a full range of recognizable, functional emotions with characteristics comparable to those of living beings.

This pioneering work not only bridges the gap between artificial and natural emotional processes, but also opens new avenues for exploring the intersection of cognition, emotion, and machine learning. We believe that the generality of our framework could lay a foundation upon which further research and applications will lead us toward emotional machines that think and act more like us.