“Observational learning of exploration-exploitation strategies in bandit tasks”

In many decision-making scenarios, individuals must balance exploring new options against exploiting known ones, a tension known as the exploration-exploitation trade-off.

In such situations, people frequently have the opportunity to observe others’ actions. Yet little is known about when, how, and from whom individuals use observational learning in the exploration-exploitation dilemma.

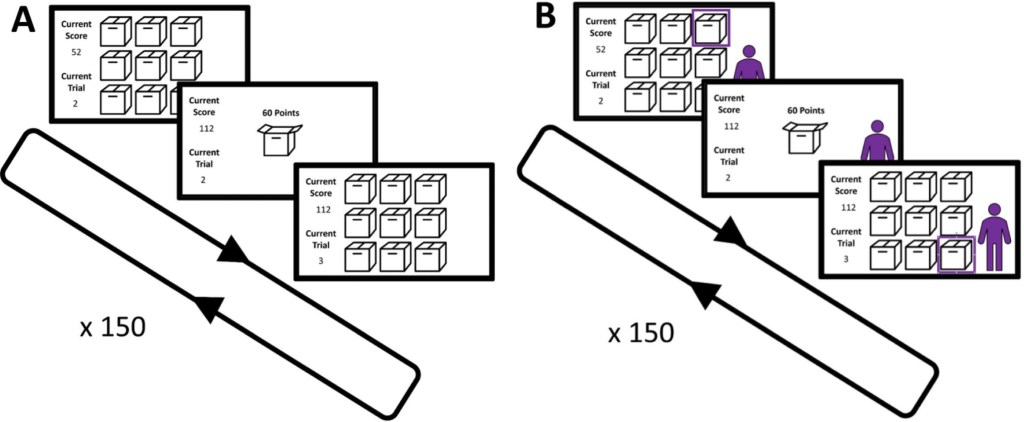

In two experiments, participants completed multiple nine-armed bandit tasks, either independently or while observing a fictitious agent that used either an explorative strategy or an equally successful exploitative strategy.

To analyze participants’ behavior, we fitted a reinforcement learning model (a simplified Kalman filter) to extract individual-level parameters for both copying and exploration.
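As a rough illustration of the kind of learner named above, the sketch below shows a textbook Kalman filter update for a single bandit arm. It is not the authors' model: it assumes stationary arm payoffs (no process noise), and the names `ArmBelief`, `kalman_update`, and `noise_variance` are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class ArmBelief:
    """Gaussian belief about one arm's payoff (hypothetical container)."""
    mean: float = 0.0        # current estimate of the arm's value
    variance: float = 100.0  # uncertainty about that estimate


def kalman_update(belief: ArmBelief, reward: float,
                  noise_variance: float = 1.0) -> ArmBelief:
    """Update the belief about the chosen arm after observing a reward.

    Assumes a stationary arm, so no variance is added between trials.
    """
    # The Kalman gain acts as an uncertainty-dependent learning rate.
    gain = belief.variance / (belief.variance + noise_variance)
    new_mean = belief.mean + gain * (reward - belief.mean)
    new_variance = (1.0 - gain) * belief.variance
    return ArmBelief(new_mean, new_variance)
```

In a nine-armed task, one such belief would be kept per arm and only the chosen arm's belief updated on each trial. The posterior variance is what lets a model of this kind express uncertainty-dependent exploration or copying.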

Results showed that participants copied the observed agents’ choices by adding a bonus to the individually estimated value of the observed action. While most participants appeared to copy unconditionally, a subset adopted a copy-when-uncertain approach, that is, they copied more when their individually acquired knowledge left them uncertain about the optimal action. Further, participants adjusted their exploration strategies to align with those they observed. We discuss the extent to which this can be understood as a form of emulation. Results on participants’ preferences to copy from explorative versus exploitative agents are ambiguous. Contrary to expectations, similarity or dissimilarity between participants’ and agents’ exploration tendencies had no impact on observational learning. These results shed light on how humans process social and non-social information in exploration scenarios and on the conditions under which observational learning occurs.
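The copying bonus described above can be sketched as an additive term inside a softmax choice rule. This is a minimal illustration under stated assumptions, not the fitted model: the softmax form, the `temperature` parameter, and in particular the way `uncertainty_scaled_bonus` scales the bonus by mean posterior standard deviation are hypothetical choices.

```python
import math


def choice_probabilities(values, observed_action, copy_bonus, temperature=1.0):
    """Softmax over individually learned values plus a copying bonus.

    The bonus is added only to the action the observed agent chose.
    """
    utilities = list(values)
    utilities[observed_action] += copy_bonus
    # Subtract the maximum before exponentiating for numerical stability.
    top = max(utilities)
    weights = [math.exp((u - top) / temperature) for u in utilities]
    total = sum(weights)
    return [w / total for w in weights]


def uncertainty_scaled_bonus(base_bonus, std_devs):
    """Hypothetical copy-when-uncertain rule.

    Scales the bonus by the mean standard deviation of the value
    estimates, so copying increases when individual knowledge is weak.
    """
    return base_bonus * (sum(std_devs) / len(std_devs))
```

Under this sketch, unconditional copying corresponds to a constant `copy_bonus`, whereas copy-when-uncertain passes the output of `uncertainty_scaled_bonus` instead.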

“Social learning and exploration–exploitation dilemma in decision-making”

This mini review examines the neurocomputational principles of social learning through the lens of the exploration–exploitation dilemma.

While the neural mechanisms of learning from others—mediated by distinct signals in the ventromedial and lateral prefrontal cortices—are well established, less is known about how these mechanisms interact with the fundamental trade-off between gathering information (“exploration”) and maximizing rewards (“exploitation”). We discuss how social environments shape this trade-off, leading to strategic behaviors such as informational free-riding or conformity.

A central focus of this review is the issue of source selection: how agents decide whom to observe. We present recent evidence suggesting that, contrary to the predictions of optimal information-seeking theories, humans often exhibit a “reliability-seeking” bias, preferring to learn from consistent, exploitation-oriented partners rather than highly exploratory ones.

We conclude by discussing the limitations of current paradigms, specifically the inherent confounding of social cues such as competence and predictability, and outline a computational framework for isolating the specific drivers of adaptive social decision-making.

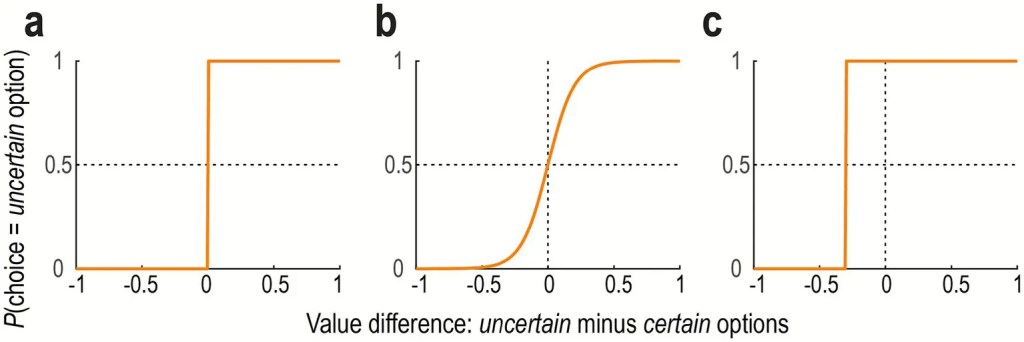

(a) No exploration. The probability of choosing the uncertain option over the certain option is plotted as a function of the value difference. The agent always chooses the option with the higher estimated value (i.e., deterministic).

(b) Random exploration. The agent generally favors the option with the higher value, but with added stochasticity (noise). Consequently, the lower-value option is occasionally chosen by chance.

(c) Directed exploration. The agent considers the uncertainty of the value estimation. The agent is more likely to choose the uncertain option, even if its expected value is lower, to gain information.
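The three panels can be read as three choice rules. The sketch below expresses each as the probability of choosing the uncertain option, given `value_diff` (value of the uncertain option minus value of the certain option). The logistic form, the `temperature` parameter, and the linear uncertainty bonus with `bonus_weight` are common modeling conventions assumed here for illustration; they are not taken from the figure itself.

```python
import math


def p_no_exploration(value_diff):
    """Panel (a): deterministic choice of the higher-valued option."""
    if value_diff > 0:
        return 1.0
    if value_diff < 0:
        return 0.0
    return 0.5  # tie: either option is equally likely


def p_random_exploration(value_diff, temperature=1.0):
    """Panel (b): logistic choice; higher temperature means more noise."""
    return 1.0 / (1.0 + math.exp(-value_diff / temperature))


def p_directed_exploration(value_diff, uncertainty,
                           bonus_weight=1.0, temperature=1.0):
    """Panel (c): an uncertainty bonus shifts the curve toward the uncertain option."""
    shifted = value_diff + bonus_weight * uncertainty
    return 1.0 / (1.0 + math.exp(-shifted / temperature))
```

For example, with `value_diff = -0.5` the deterministic rule never picks the uncertain option, the random rule picks it less than half the time, and the directed rule with `uncertainty = 1.0` picks it more than half the time.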

Social learning strategies—including the critical decision of from whom to learn—are modulated by partner characteristics such as the exploration–exploitation balance, decision quality, predictability, group membership, and social status.

However, a significant open question remains: which specific partner attributes drive these strategic adjustments?

In naturalistic settings, these characteristics are often empirically intertwined.

For example, a lower level of random exploration (i.e., reduced noise) typically correlates with higher decision quality, greater predictability, and often prestige or majority status.

Similarly, in-group membership frequently covaries with predictability, as agents possess richer prior knowledge about their own group’s norms. Consequently, it is difficult to determine whether observed biases reflect sensitivity to competence, predictability, social identity, or a combination thereof. That said, a supplementary analysis in our study suggests that competence contributes more than predictability.

Furthermore, few studies in the social learning literature to date have carefully distinguished between random and directed exploration.

How people learn from others shapes not only individual choices but also the collective ability to adapt intelligently to changing environments.

This study highlights two fundamental mechanisms of social learning—one that promotes rapid convergence at the cost of flexibility, and another that enhances flexibility but slows coordination.

Using computational modeling and simulations, we show that these mechanisms, while limited in isolation, can complement each other when combined.

Their coexistence—an evolutionarily stable outcome—strikes a balance between efficiency and adaptability in collective decision-making under uncertainty. These findings are particularly relevant to modern societies, where the potential for maladaptive herding is rapidly increasing, and they point to design principles for fostering resilient decision systems in human and AI collectives.

Balancing efficiency and flexibility in collective decision-making is increasingly critical in modern societies characterized by rapid sociocultural and technological change.

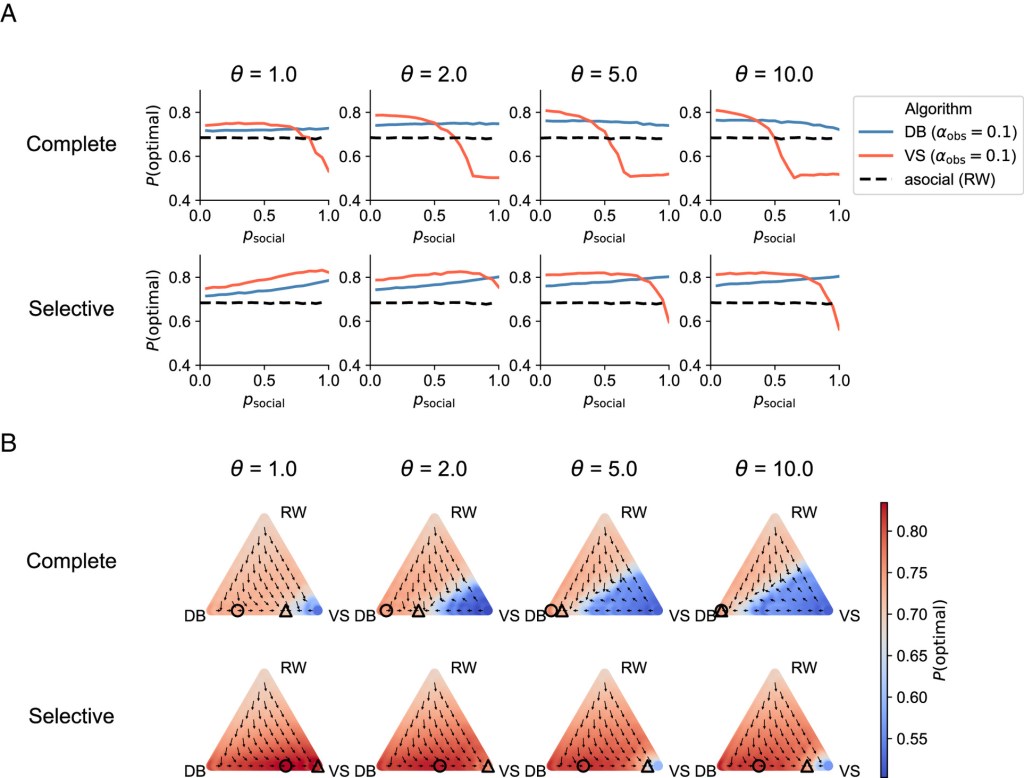

Recent research in cognitive neuroscience has proposed two contrasting computational algorithms for social learning: value shaping (VS) and decision biasing (DB). VS posits that others’ choices serve as “pseudo-rewards” that directly shape an observer’s valuations, leading them to prefer popular options even in the absence of outcome feedback. In contrast, DB confines the influence of social information to behavior—observers may imitate popular actions, but they update their valuations solely through personal experience. Although both algorithms facilitate individual adaptation under uncertainty, their interactive dynamics and group-level consequences remain largely unexplored.
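The contrast between the two algorithms can be made concrete with a small sketch. It assumes a delta-rule (Rescorla-Wagner style) value update, and the parameter names `alpha`, `social_alpha`, `pseudo_reward`, and `bias` are hypothetical; the paper's actual model specification may differ.

```python
def delta_update(value, target, learning_rate):
    """Move a value estimate a fraction of the way toward a target."""
    return value + learning_rate * (target - value)


def value_shaping_step(values, chosen, reward, observed,
                       alpha=0.3, social_alpha=0.1, pseudo_reward=1.0):
    """VS: the observed choice acts as a pseudo-reward that changes valuations."""
    new_values = list(values)
    # Learn from one's own outcome.
    new_values[chosen] = delta_update(new_values[chosen], reward, alpha)
    # Learn from the other's choice, even without outcome feedback.
    new_values[observed] = delta_update(new_values[observed],
                                        pseudo_reward, social_alpha)
    return new_values


def decision_biasing_step(values, chosen, reward, alpha=0.3):
    """DB: valuations are updated from personal experience only."""
    new_values = list(values)
    new_values[chosen] = delta_update(new_values[chosen], reward, alpha)
    return new_values


def decision_biasing_utilities(values, observed, bias=0.5):
    """DB: social information biases the choice, leaving stored values untouched."""
    utilities = list(values)
    utilities[observed] += bias
    return utilities
```

The key difference is where the social signal lands: in VS it is written into the stored values and persists across trials, whereas in DB it only shifts the utilities used for the current decision.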

To address this gap, we developed computational models of VS and DB within a reinforcement learning framework and conducted agent-based simulations to examine collective performance in uncertain and dynamically changing environments. The results reveal a trade-off: VS enables rapid convergence and high efficiency in stable contexts, whereas DB promotes greater adaptability under environmental volatility. These differences are amplified in larger groups, particularly under strong majority influence. Importantly, evolutionary analyses indicate that both learning types can coexist stably, allowing their complementary strengths to enhance group performance.

Together, our findings provide a computational and evolutionary account of how social learning can both enhance and impair collective intelligence—and suggest design principles for fostering resilient collective decision systems in human and AI societies facing rapid change.

(B) Group-level performance as a function of group composition. The proportions of DB, VS, and asocial (RW) agents were varied in 5% increments, and the average performance for each type was calculated. The heatmap displays overall collective performance (averaged across all agent types), with warmer colors indicating higher values. The vector field illustrates the evolutionary dynamics, representing likely trajectories of group composition over time. The circle roughly marks the composition that yields the highest group performance, while the triangle indicates the evolutionarily stable equilibrium. See Materials and Methods for details.