There is a great Quanta article on Melanie Mitchell that discusses her effort to bring analogy-making into AI. Some quotes:

“Today’s state-of-the-art neural networks are very good at certain tasks, but they’re very bad at taking what they’ve learned in one kind of situation and transferring it to another” — the essence of analogy.

“Analogy isn’t just something humans do. Some animal species are kind of robotic, but others take prior experiences and map them onto new experiences. Maybe it’s one way to put a spectrum of intelligence onto different living systems: To what extent can you make analogies?”

“You can show a deep neural network millions of pictures of bridges and it can probably recognize a new picture of a bridge over a river or something. But it can never abstract the notion of ‘bridge’ to, say, our concept of bridging the gender gap.”

“Some people say being able to predict the future is what’s key for AI, or common sense, or the ability to retrieve memories useful in a current situation. But in each of these things, analogy is central.”

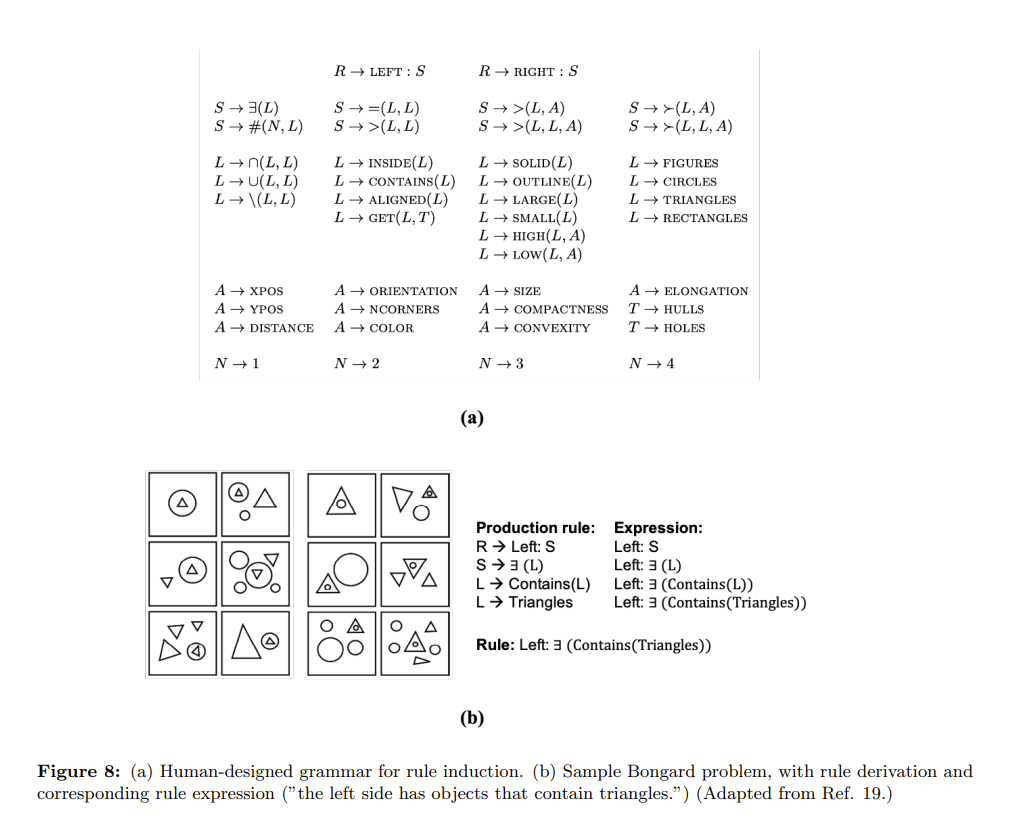

More technical details can be found in her recent paper, “Abstraction and Analogy-Making in Artificial Intelligence”, whose abstract reads:

Conceptual abstraction and analogy-making are key abilities underlying humans’ abilities to learn, reason, and robustly adapt their knowledge to new domains. Despite a long history of research on constructing AI systems with these abilities, no current AI system is anywhere close to a capability of forming humanlike abstractions or analogies. This paper reviews the advantages and limitations of several approaches toward this goal, including symbolic methods, deep learning, and probabilistic program induction. The paper concludes with several proposals for designing challenge tasks and evaluation measures in order to make quantifiable and generalizable progress in this area.