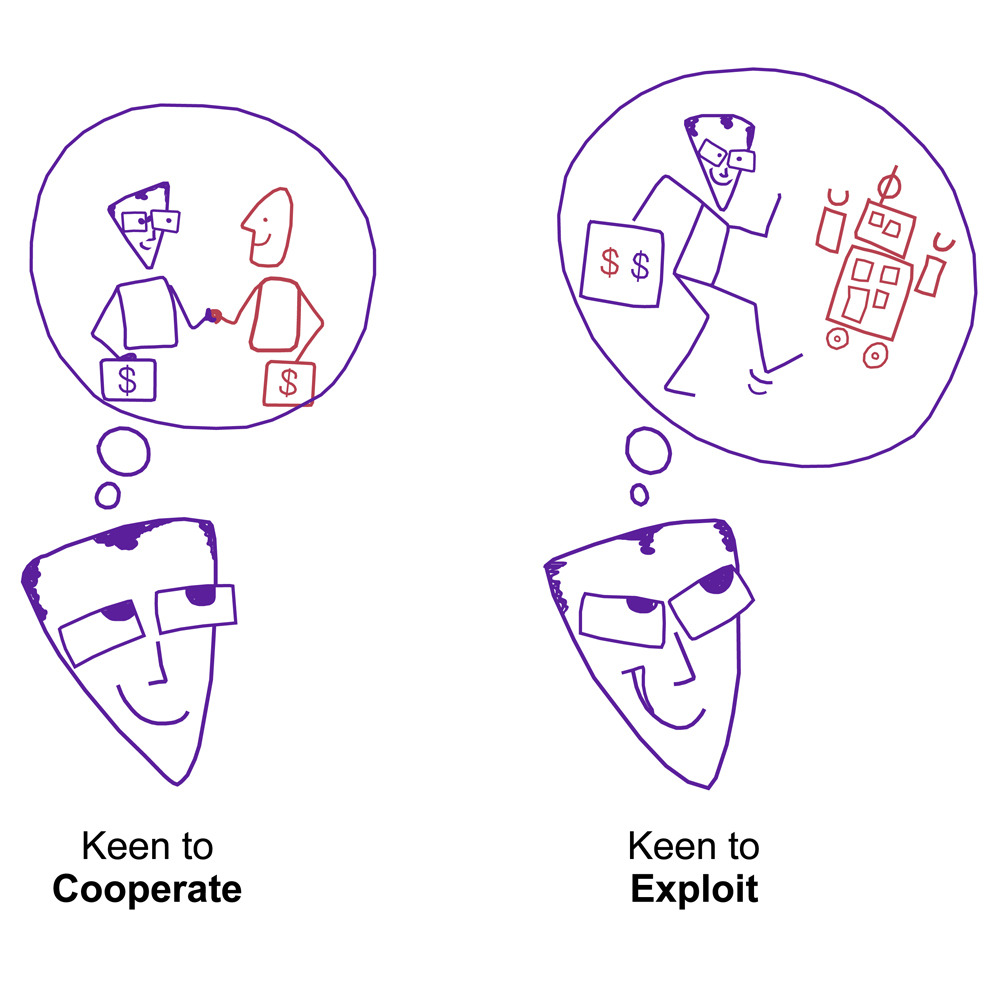

Algorithm exploitation: Humans are keen to exploit benevolent AI:

- People predict that AI agents will be as benevolent (cooperative) as humans

- People cooperate less with benevolent AI agents than with benevolent humans

- Reduced cooperation occurs only if it serves people’s selfish interests

- People feel guilty when they exploit humans but not when they exploit AI agents

We cooperate with other people despite the risk of being exploited or hurt.

If future artificial intelligence (AI) systems are benevolent and cooperative toward us, what will we do in return? Our cooperative dispositions are weaker when we interact with AI.

Contrary to the hypothesis that people mistrust algorithms, participants trusted their AI partners to be as cooperative as humans. However, they reciprocated the AI’s benevolence less and exploited the AI more than they exploited humans.

These findings warn that future self-driving cars or co-working robots, whose success depends on humans reciprocating their cooperation, run the risk of being exploited. This vulnerability calls not just for smarter machines but also for better human-centered policies.