“Evolution of Brains and Computers: The Roads Not Taken”, a perspective article in Entropy by Ricard Solé and Luís F. Seoane, has a great discussion on intelligence and on whether machines can ever achieve true intelligence.

When computers started to become a dominant part of technology around the 1950s, fundamental questions about reliable designs and robustness were of great relevance. Their development gave rise to the exploration of new questions, such as what made brains reliable (since neurons can die) and how computers could get inspiration from neural systems.

In parallel, the first artificial neural networks came to life. Since then, the comparative view between brains and computers has been developed in new, sometimes unexpected directions. With the rise of deep learning and the development of connectomics, an evolutionary look at how both hardware and neural complexity have evolved or been designed is required. In this paper, we argue that important similarities have resulted both from convergent evolution (the inevitable outcome of architectural constraints) and inspiration of hardware and software principles guided by toy pictures of neurobiology. Moreover, dissimilarities and gaps originate from the lack of major innovations that have paved the way to biological computing (including brains) that are completely absent within the artificial domain.

As occurs within synthetic biocomputation, we can also ask whether alternative minds can emerge from A.I. designs. Here, we take an evolutionary view of the problem and discuss the remarkable convergences between living and artificial designs and the preconditions for achieving artificial intelligence.

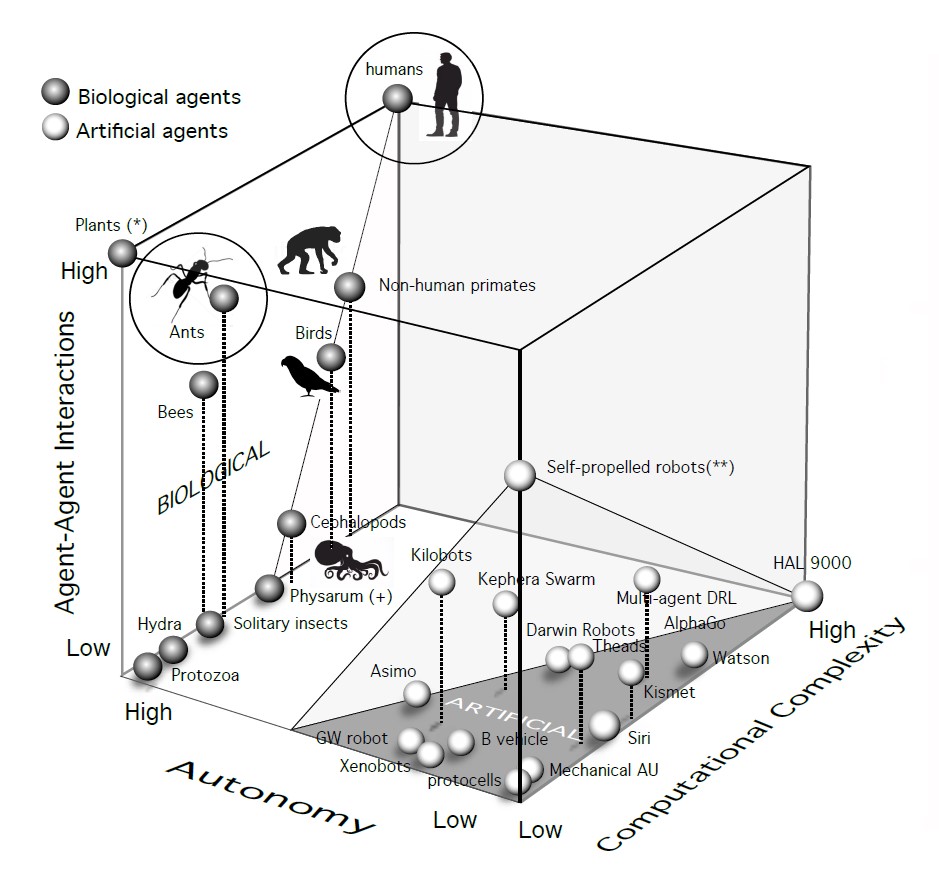

Plants (*) are located in the upper left corner since ecological interactions are known to play a key role, some of them by means of chemical communication exchanges.

Current A.I. implementations cluster together in the high-computation and low-social complexity regime, with variable degrees of interaction-based rules.

The boundaries of this artificial subset (dark gray) are limited in the Autonomy direction by a boundary where several instances of mobile neurorobotic agents are located. The left wall of high autonomy is occupied by living systems displaying diverse levels of social complexity. This includes some unique species such as Physarum (+) that involves a single-celled individual.

The latter includes the presence of active mechanisms of niche construction, ecosystem engineering or technological skills. The corner occupied by plants (*) involves (for example, in a forest) small computational power, massive populations and an “extended mind” that needs to be understood in terms of their interconnectedness and active modification of their environments, particularly soils.

While ants in particular rival humans in their sheer numbers and ecological dominance, the massive role played by externalized technology in humans makes them occupy a distinct, isolated domain in our cube (with both individuals and groups able to exploit EC).

In a recent paper entitled “Building machines that learn and think like people”, it has been argued that, for ANNs to rapidly acquire generalization capacities through learning-to-learn, some important components are missing.

– One is to generate context and improve learning by building internal models of intuitive physics.

– Secondly, intuitive psychology is also proposed as a natural feature present since early childhood (children naturally distinguish living from inanimate objects) which could be obtained by introducing a number of Bayesian approximations.

– Finally, compositionality is added as a way to avoid combinatorial explosions.

In their review, Lake et al. discussed these improvements within the context of deep networks and problem-solving for video games, and thus considered the programming of primitives that enrich the internal degrees of freedom of the ANN. These components would expand the flexibility of deep nets towards comprehending causality. Lake et al. also pointed at several crucial elements that need to be incorporated, language being a prominent one. So far, despite groundbreaking advances in language processing, the computational counterparts of human language are very far from true language abilities. These improvements will without doubt create better imitations of thinking, but they are outside an embodied world where, we believe, true complex minds can emerge by evolution.

This table highlights the current chasm separating living brains from their computational counterparts. Each item in the non-human column is intended to reflect a characteristic quality, which does not reflect the whole variability of this group (which is very broad). For the DANN and robotics columns, there is also large variability and our choice highlights the presence of the given property in at least one instance. As an example, the wiring of neural networks in neurorobotic agents is very often feedforward, but the most interesting case studies discussed here incorporate cortical-like, reentrant networks.

[ … ] the paths that lead to brains seem to exploit common, perhaps universal properties of a handful of design principles and are deeply limited by architectural and dynamical constraints. Is it possible to create artificial minds using completely different design principles, without threshold units, multilayer architectures or sensory systems such as those that we know? Since millions of years of evolution have led, through independent trajectories, to diverse brain architectures and yet not really different minds, we need to ask if the convergent designs are just accidents or perhaps the result of our constrained potential for engineering designs. Within the context of developmental constraints, the evolutionary biologist Pere Alberch wrote a landmark essay that can further illustrate our point. It was entitled “The Logic of Monsters” and presented compelling evidence that, even within the domain of teratologies, it is possible to perceive an underlying organization: far from a completely arbitrary universe of possibilities (since failed embryos are not the subject of selection pressures, it can be argued that all kinds of arbitrary morphological “solutions” could be observed), there is a deep order that allows us to define a taxonomy of “anomalies”. Within our context, it would mean that the universe of alien minds might also be deeply limited.