“Algorithmic Idealism: What Should You Believe to Experience Next?”

I argue for an approach to the Foundations of Physics that puts the question in the title center stage, rather than asking “what is the case in the world?”. This approach, algorithmic idealism, attempts to give a mathematically rigorous in-principle answer to this question, both in the usual empirical regime of physics and in more exotic regimes within cosmology, philosophy, and science-fiction (but perhaps soon real) technology.

I begin by arguing that quantum theory, in its actual practice and in some interpretations, should be understood as telling an agent what they should expect to observe next (rather than what is the case), and that the difficulty of answering the former question from the usual “external” perspective is at the heart of persistent enigmas such as the Boltzmann brain problem, extended Wigner’s friend scenarios, Parfit’s teletransportation paradox, or our understanding of the simulation hypothesis.

Algorithmic idealism is a conceptual framework, based on two postulates that admit several possible mathematical formalizations, cast in the language of algorithmic information theory.

Here I give a non-technical description of this view and show how it dissolves the aforementioned enigmas: for example, it claims that you should never bet on being a Boltzmann brain regardless of how many there are and that shutting down computer simulations does not generally terminate their inhabitants, and it predicts the apparent embedding into an objective external world as an approximate description.

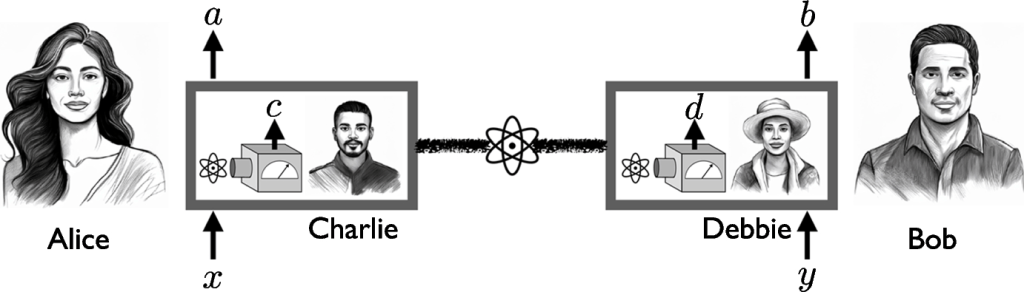

An entangled quantum state is shared between two locations. At one location, Charlie performs a fixed measurement on one of the two particles, obtaining outcome c. Alice, however, regards the box with the particle and Charlie as a closed quantum system, and decides to perform a measurement x on it, obtaining outcome a. (Note that x is chosen after c has been established.) In the special case of x=1, she asks Charlie directly for his outcome and outputs a=c. At the other location, we have two further observers, Debbie and Bob, who act analogously. If the externally observed statistics P(a, b|x, y) violate a so-called local friendliness inequality, then locality and no-superdeterminism imply that there cannot exist, for all x and y, a joint probability distribution P(a, b, c, d|x, y) that describes the observations of all agents.

I have presented an approach to the Foundations of Physics, tentatively called algorithmic idealism, which declares the first-person perspective fundamental. Essentially, it is the question of “what will probably happen to me next?” that is taken to be fundamental, rather than the question of “what is the case in the world?”.

Despite its name, high-level and vague concepts such as agents, consciousness or belief do not play any fundamental role in algorithmic idealism, and its two postulates admit several possible rigorous mathematical formalizations, i.e. a class of concrete theories.

By construction, the predictions of algorithmic idealism agree with those of our physical theories in standard laboratory situations if a suitable physical version of the Church-Turing thesis is true. Indeed, algorithmic idealism is constructed in the spirit of empiricism: no insights of any kind into the ontology of the world are directly sought, and essentially all human preconceptions about metaphysics are demolished on the way (or, rather, declared to be only useful models that sometimes give approximately the right answers).

Rather than asking what the world is like, the sole goal of this approach is to get the phenomena right. In this sense, it intends to represent a minimal extension of the usual methodology of physics into new territory: from the regime of intersubjective experiments, where everybody can learn about the results, to private experiments for which a third-person view or prediction “from the outside” is in principle unavailable.

The mere possibility (even if considered unlikely) that some aspects of algorithmic idealism are closer to the truth than our standard view hints at a surprising lack of imagination in the way we look at some essential questions of humanity. Should humanity colonize the galaxy in order to make a large number of conscious lives possible that would not otherwise have existed? Arguments in favor of this typically rest on an implicit metaphysics of “one single real world among infinitely many possible ones” that has already been criticized by Lewis and that is rejected by algorithmic idealism. Does shutting down a computer simulation terminate its simulated conscious beings? This usually seems to be taken as obviously true, but algorithmic idealism claims that this is not generally the case, and that the answer will depend on how much information from the outside world is fed into the simulation. But if we do not understand what to say about the simulation of agents, how can we be so sure we understand death? Perhaps we regard transitioning into an infinite-duration unconscious “muted state” as the only scientifically plausible possibility merely because we have so far only seen alternatives promoting esoteric nonsense and religious wishful thinking, and because we want to signal how serious and trustworthy we are by not even addressing the question.

These examples also demonstrate that algorithmic idealism is not merely a theory of how agents can best predict their future observations, but a radically new approach to physical reality.

If it were simply some sort of theory of epistemology, then what will actually happen to an agent would be determined by the (in general unknown) physical world in which the agent would operate. In contrast, algorithmic idealism denies that the agent is fundamentally embedded into some world, and grounds whatever will happen to the agent on its self state alone. This counterintuitive starting point allows it to formulate a law which is meant to express how an agent’s fate is determined in mundane but also truly exotic situations, such as simulation and multiplication scenarios, and it makes very counterintuitive predictions (such as the possibility that agents may “fall out” of the world into which they are effectively approximately embedded, or the notion of probabilistic changelings). These predictions would not only be impossible to obtain in an epistemic approach (because, as we discussed via Restriction A, third-person claims about the world have nothing to say about first-person chances in, say, duplication scenarios), but they outright contradict the assumption of an agent that is embedded into some given but unknown world. This also indicates a clear separation from operational theories as they are often formulated in the quantum information theory context: while these theories also often feature agents and their observations (such as Alice and Bob in a communication scenario), these agents are assumed to be embedded into a joint physical system (external world) that mediates their communication and observations. In algorithmic idealism, on the other hand, this external world is not postulated a priori, but is an emergent statistical phenomenon, and so is the intuitive idea that Alice and Bob share the same physical world.

In summary, algorithmic idealism was not constructed with the intention to say anything directly about reality. However, the methodological starting point of ignoring the usual realist assumptions led to a formulation which indeed admitted novel predictions that are not otherwise available, but which at the same time is incompatible with the received view of physical reality, and thus distinct from existing epistemic or operational approaches.

There are two main open technical problems for algorithmic idealism, and these turn out to be related. First, the bit model has to be replaced by a better mathematical formalization, and this is currently work in progress. Essentially, the bit model precludes the possibility of “forgetting”, which is unmotivated, unrealistic, and an artefact of the way that Solomonoff induction has been formulated in Algorithmic Information Theory.
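For orientation, the following recalls one standard textbook formulation of Solomonoff induction over bit strings. It is offered only as a sketch of the kind of object the bit model builds on, not as the formalization of the two postulates; the symbols U, M, p, x and b are notation assumed here for illustration. The sum runs over minimal programs p whose output begins with x.

```latex
% U: a fixed universal monotone Turing machine (assumed reference machine)
% |p|: length of program p in bits; x: a finite bit string; b \in \{0,1\}
M(x) = \sum_{p \,:\, U(p) = x\ast} 2^{-|p|},
\qquad
P(b \mid x) = \frac{M(xb)}{M(x)}
```

In this formulation the conditioning string only ever grows by appending a bit (x becomes xb), so earlier bits are never dropped. This is one way to see why a model of this type leaves no room for “forgetting”.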

Second, a successful formalization of algorithmic idealism should predict some phenomenal aspects of Quantum Theory. Clearly, Quantum Theory is one of the main motivations for algorithmic idealism. Moreover, if its probabilities of events are fundamentally agent-relative, then this already resembles a phenomenon that is sometimes considered genuinely quantum. It also hints at the possibility that the resulting description of an agent’s external world may have the feature that not all potential events fit into a joint Kolmogorovian probability distribution, a fundamental structural element of Quantum Theory which is necessary (though not sufficient) for many of its phenomena. The main goal should be to reconstruct phenomena that are genuinely quantum, such as the violation of Bell inequalities together with compliance with the no-signalling principle, or the possibility of performing BQP-complete computations in polynomial time. […] The main question is whether the statistical results of experiments are predicted to behave in genuinely nonclassical ways, and any claim to say something about this must engage with the insights from research on the foundations of Quantum Theory. In particular, its ability (or inability) to predict aspects of Quantum Theory is an important opportunity to test algorithmic idealism, and this alone suggests that it would be methodologically wrong to build the quantum formalism into algorithmic idealism by construction.
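As a point of reference for what “violation of Bell inequalities together with compliance with the no-signalling principle” means quantitatively, the Python sketch below evaluates the standard textbook quantum prediction for the CHSH expression on a singlet state. It illustrates the target phenomenon only and is not derived from algorithmic idealism. The measurement angles and all function names are illustrative assumptions.

```python
import math
from itertools import product

# Illustrative measurement angles (an assumption; any CHSH-optimal choice would do).
ALICE_ANGLES = (0.0, math.pi / 2)
BOB_ANGLES = (math.pi / 4, -math.pi / 4)
OUTCOMES = (+1, -1)


def singlet_distribution(theta_a, theta_b):
    """Textbook P(a, b) for spin measurements along theta_a, theta_b on a singlet."""
    corr = -math.cos(theta_a - theta_b)  # quantum correlator E = -cos(angle difference)
    return {(a, b): (1 + a * b * corr) / 4 for a, b in product(OUTCOMES, repeat=2)}


def correlator(x, y):
    """E(x, y): expectation of the product a * b for settings x, y."""
    dist = singlet_distribution(ALICE_ANGLES[x], BOB_ANGLES[y])
    return sum(a * b * prob for (a, b), prob in dist.items())


def chsh_value():
    """CHSH expression S; any local hidden-variable model obeys |S| <= 2."""
    return correlator(0, 0) + correlator(0, 1) + correlator(1, 0) - correlator(1, 1)


def alice_marginal(a, x, y):
    """P(a | x, y); no-signalling requires that this does not depend on y."""
    dist = singlet_distribution(ALICE_ANGLES[x], BOB_ANGLES[y])
    return sum(dist[(a, b)] for b in OUTCOMES)


if __name__ == "__main__":
    print("|S| =", abs(chsh_value()))  # exceeds the classical bound of 2
    for x, y in product((0, 1), repeat=2):
        print("P(a=+1 | x=%d, y=%d) =" % (x, y), alice_marginal(+1, x, y))
```

Here |S| reaches 2√2, above the classical bound of 2, while Alice's marginal stays at 1/2 whatever Bob chooses. A formalization of algorithmic idealism would need to reproduce statistics of this kind without having the quantum formalism built in.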

There are several other elements of algorithmic idealism that need further elaboration. As a simple example, its reference to a “physical Church-Turing thesis” has to be further fleshed out: what exactly is assumed here about the computability of our physical world?

As an epilogue, let us finally return to the scenario of Section 1: how should we answer the patient’s desperate question, under the unlikely assumption that algorithmic idealism is true? Will they “wake up” in the computer simulation or not? Given that we do not know the patient’s self state or the details of the simulation, and that we do not even rely on a particular formalization of Postulates 1 and 2, we can only attempt some sort of “rule of thumb” answer based on general principles of algorithmic idealism: you are your self state, and there is no need to be realized in a material object in what you currently experience as your external world in order to be “awake”. So yes, perhaps you will experience the simulated environment. But then, the simulation should be algorithmically simple, or otherwise the transition will be unlikely: a complicated paradise-like environment tailored to your needs will probably not do the job. So, in the end, maybe you would not even have needed to spend your money, because the simulated world exists mathematically regardless of what AfterMath has been doing. At the very least, you do not have to pay AfterMath to prevent them from shutting it down later. But to say more, one would need a detailed mathematical model of algorithmic idealism and a lot more work.

The rule of thumb answer can hence perhaps be summarized in two words: be hopeful.