“Beyond thinking fast and slow: a Bayesian intuitionist model of clinical reasoning”

Clinical reasoning is a quintessential aspect of medical training and practice, and is a topic that has been studied and written about extensively over the past few decades.

However, the predominant conceptualisation of clinical reasoning has so far been extrapolated from cognitive psychological theories developed in other areas of human decision-making. To date, the prevailing model for understanding clinical reasoning remains the dual process theory, which views cognition as a dichotomous two-system construct in which intuitive thinking is fast, efficient, automatic but error-prone, and analytical thinking is slow, effortful, logical, deliberate and likely more accurate. Nonetheless, we find that the dual process model has significant flaws, not only in its fundamental construct validity, but also in its lack of practicality and applicability in naturalistic clinical decision-making.

Instead, we herein offer an alternative Bayesian-centric, intuitionist approach to clinical reasoning that we believe is more representative of real-world clinical decision-making, and suggest pedagogical and practice-based strategies to optimise and strengthen clinical thinking in this model to improve its accuracy in actual practice.

From a historical perspective, the cognitive model of dichotomising human thought processes into separate pathways of intuition (“irrational thinking”) and reason (“rational thought”) actually originated in the early 1900s …, before it became formalised, developed and widely advocated by mainstream scholars and psychologists such as Kahneman, Epstein, Evans, Stanovich and Carruthers. Subsequently, in the medical arena, Croskerry and colleagues have directly applied the dual process model to explain clinical reasoning and how errors in diagnosis and clinical judgment occur. …

Over the years, the dual process model in cognitive and behavioral psychology has come under increasing scrutiny over its construct validity. The biggest issue highlighted, by far, has been the lack of empirical evidence that definitively proves the existence of two distinct cognitive systems. On the contrary, there have been many varying propositions of what unique attributes clearly define the intuitive and analytical categories of cognition, with clear overlaps in many of the supposedly distinguishing characteristics of each category. As such, it has been suggested that a cognitive continuum model might more accurately describe cognitive patterns based on where they lie on a spectrum of qualifying attributes such as judgment speed and resource dependence. In more recent times, the development of a thermodynamically frugal “predictive brain model” in cognitive science describes a new understanding of human brains as proactive predicting machines that generate hypotheses of what is likely to happen next by matching the earliest incoming information and signals with previously stored knowledge/experiences. Therefore, an intuitive brain is a non-negotiable starting point: it automatically generates predictions (“hypotheses”) based on early incoming sensory signals that are quickly matched to known patterns from previous knowledge/experiences. Then, it is self-regulated by in-built systems that flag up predictive errors (PEs) when subsequent cues deviate from expectations, or suppress PE signals when expectancies are met. Subsequently, with each new experience, the mental model recalibrates to improve predictive precision and accuracy.

… … …

Admittedly, while we argue that an intuition-based model of clinical reasoning is more naturalistic and practical, there will inevitably be concerns over its potential diagnostic inaccuracies due to reported associations with cognitive biases and errors. In fact, such concerns have led to significant exhortations in the clinical reasoning literature to deliberately deploy analytical thought and de-biasing techniques to counter what are often assumed to be erroneous intuitive responses.

Firstly, concerns that clinical intuition is error-prone due to a proclivity for cognitive bias might be overstated. Several studies in clinical reasoning have not shown elaborate analytical judgments to be necessarily more accurate than clinical intuitions. … Intuitions are not necessarily more error-prone than analytical processes when there is a knowledge or experiential deficit, and errors in intuitive judgment may not always be mediated by cognitive biases.

Secondly, there are also specific situations where intuition can be superior to analytical judgments – that is, the concept of “ecological rationality”. In certain environments, especially complex and uncertain situations, intuitive or heuristics-based reasoning could be superior to analytical judgments by avoiding redundancy and the overfitting phenomenon.

Thirdly, intuition is more frugal and practical in clinical environments that have significant time and resource constraints. In such situations, choosing elaborate analytical thought processes because they are perceived to optimise decisions may ironically lead to an impasse or prolonged inaction, with downstream repercussions such as failure to make timely decisions or time taken away from other important clinical tasks.

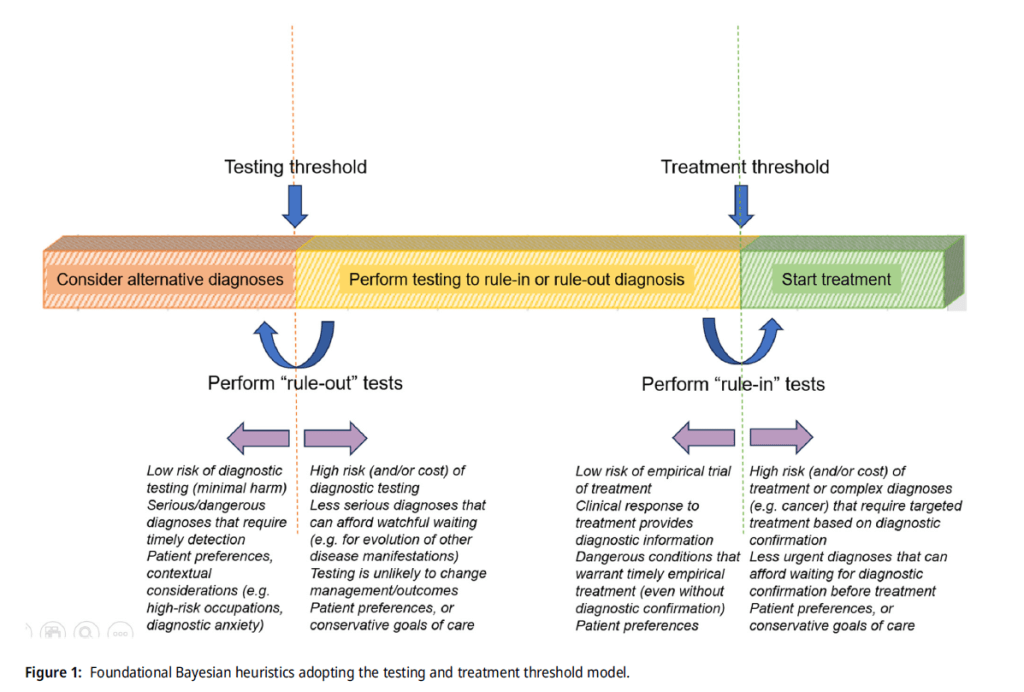

In this foundational Bayesian heuristic, there are three possible decision outcomes for every clinical case:

1) low pre-test probability that falls below the testing threshold (where alternative diagnoses should be considered),

2) intermediate pre-test probability that falls between the testing and treatment thresholds (where “rule-out” tests are selected if it is low-intermediate probability, and “rule-in” tests are chosen if it is high-intermediate probability), and

3) high pre-test probability that crosses the treatment threshold, favouring prompt initiation of empirical treatment.
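The three decision outcomes above can be illustrated with a minimal Python sketch. The function name `triage_decision`, the default threshold values (0.10 and 0.70), and the use of the midpoint between the two thresholds to separate "low-intermediate" from "high-intermediate" probability are all illustrative assumptions; none of them come from the article, and real thresholds would depend on the condition, the test and the treatment in question.

```python
def triage_decision(pretest, test_threshold=0.10, treat_threshold=0.70):
    """Map an intuitive pre-test probability to one of the decision outcomes.

    The threshold defaults are hypothetical placeholders, not clinical values.
    """
    if not 0.0 <= pretest <= 1.0:
        raise ValueError("pretest must be a probability between 0 and 1")
    if not 0.0 <= test_threshold < treat_threshold <= 1.0:
        raise ValueError("need 0 <= test_threshold < treat_threshold <= 1")

    # 1) Below the testing threshold: look elsewhere.
    if pretest < test_threshold:
        return "consider alternative diagnoses"

    # 3) At or above the treatment threshold: treat empirically.
    if pretest >= treat_threshold:
        return "start empirical treatment"

    # 2) Intermediate zone: the midpoint split is an assumption made
    #    here to distinguish low- from high-intermediate probability.
    midpoint = (test_threshold + treat_threshold) / 2
    if pretest < midpoint:
        return "select rule-out test"
    return "select rule-in test"


if __name__ == "__main__":
    for p in (0.05, 0.20, 0.50, 0.80):
        print(p, "->", triage_decision(p))
```

Treating the thresholds as parameters rather than constants reflects that they are case-specific judgments in this heuristic, not fixed numbers.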

… to further improve the accuracy of intuitive pre-test and post-test probabilities, prior medical training would be important to develop an understanding of base rate prevalence of disease in the local population, illness script-based learning of clinical conditions (understanding risk factors (“enabling conditions”), disease pathology (“fault”), and expected clinical and diagnostic findings (“consequences”)), evidence-based understanding of the predictive value of relevant clinical symptoms/signs and diagnostic tests, and iterative experiential learning through first-hand clinical exposure or second-hand case discussions by other colleagues.
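As a hedged illustration of how a pre-test probability becomes a post-test probability, the sketch below uses the standard odds form of Bayes' theorem with a likelihood ratio. The article does not prescribe this particular calculation; the function name `post_test_probability` and the example numbers are assumptions chosen only to make the arithmetic easy to follow.

```python
def post_test_probability(pretest, likelihood_ratio):
    """Update a pre-test probability with a finding's likelihood ratio.

    Odds form of Bayes' theorem:
        post-test odds = pre-test odds * likelihood ratio
    """
    if not 0.0 < pretest < 1.0:
        raise ValueError("pretest must be strictly between 0 and 1")
    if likelihood_ratio < 0.0:
        raise ValueError("likelihood_ratio must be non-negative")

    pre_odds = pretest / (1.0 - pretest)      # probability -> odds
    post_odds = pre_odds * likelihood_ratio   # Bayesian update
    return post_odds / (1.0 + post_odds)      # odds -> probability


if __name__ == "__main__":
    # Hypothetical example: 20% pre-test probability and a positive
    # finding with a likelihood ratio of 4.
    # Pre-test odds 0.25 -> post-test odds 1.0 -> probability 0.5.
    print(post_test_probability(0.20, 4.0))
```

A likelihood ratio above 1 raises the probability (a "rule-in" direction), a ratio below 1 lowers it (a "rule-out" direction), and a ratio of exactly 1 leaves it unchanged.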

Using a clinical predictive brain model of hypotheses generation and appraisal, we proposed several pedagogical and practice-based strategies aimed at improving intuitive prediction accuracy and sensitivity to error signals.